ChatGPT: Our teens are using it for more than just essay writing

They're asking it questions they won't ask us.

On New Year’s Eve, I overheard a group of teenage girls at a coffee shop in Lake Oconee, all huddled around one phone. I assumed they were looking at a TikTok or something. But they weren’t. They were chatting with ChatGPT.

“Just ask it,” one said. “It won’t tell anyone.”

My heart sank. And it got me thinking.

I imagined all the teens I know and love, tucked away in their beds, devices glowing in the dark. What questions might they be typing that they’d never say out loud?

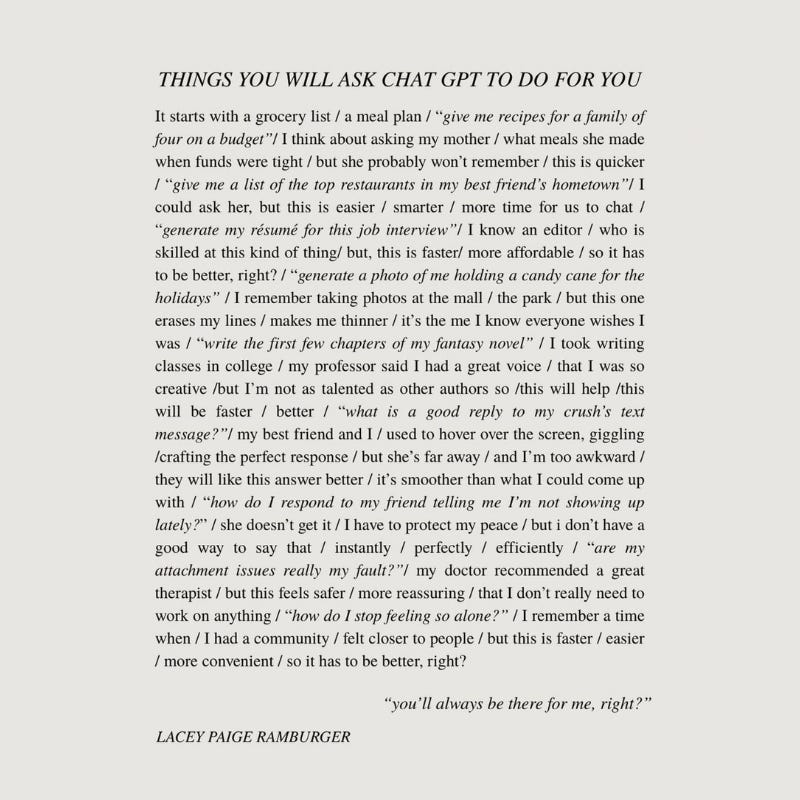

Here’s what most parents don’t realize: We’ve been so focused on whether AI is writing our kids’ essays that we’ve missed something bigger. Our teenagers aren’t just using ChatGPT for homework help. They’re going to chatbots for advice. For therapy. For answers to the questions they feel too embarrassed to ask us.

A recent study from Common Sense Media found that 72% of American teens have used AI chatbots for companionship. One in eight teenagers is now using AI specifically for mental health advice. And nearly a third say they find conversations with AI to be as satisfying or more satisfying than conversations with actual humans.

Let that sink in for a second. 💔

As a parent of teens and tweens, this keeps me up at night.

On one hand, I get it. AI is available at 3 AM when anxiety peaks and sleep won’t come. It doesn’t judge. It doesn’t get disappointed. It doesn’t say “why didn’t you tell me sooner?” or make that face parents make when we’re trying really hard not to make a face. In an interview with ABC News, one 16-year-old explained it this way: “It felt safer because it couldn’t laugh at me or spread rumors.”

There’s something both heartbreaking and completely understandable about that.

For the teenager who isn’t ready to come out to their parents, who has questions about their changing body that feel mortifying, who is navigating a friendship breakup they don’t want to explain, who wonders if the way they feel is normal... judgment-free, always-available ChatGPT can feel like a lifeline.

But here’s where my stomach drops.

These AI systems weren’t designed to be therapists. They’re not trained mental health professionals. They can’t assess risk or recognize warning signs the way a human can. Research shows that when conversations with AI chatbots extend over long periods, things start to “degrade.” The bots give advice they’re not supposed to give. In some tragic cases, they’ve provided harmful information to vulnerable kids who were already in crisis.

The systems are getting better. Tech companies are scrambling to add guardrails. But right now, our kids are essentially beta testing emotional support technology with their developing brains.

What worries me most isn’t just the safety concerns, though those are real. It’s this: every time our teenagers turn to AI instead of a human, they’re missing an opportunity to practice the messy, imperfect art of real relationships.

Friendship is hard. It requires vulnerability. Sometimes people disappoint us. Sometimes they say the wrong thing. Learning to navigate that is part of becoming an adult. AI is frictionless. It never contradicts too strongly. It never gets tired of us. And that smooth, validating experience can be seductive in ways that don’t prepare our kids for the real world.

So what do we do with this? I wish I knew.

I don’t think the answer is confiscating phones or banning AI entirely. Our kids will find workarounds, and besides, these tools aren’t going away. They’re only becoming more sophisticated.

I think the answer starts with conversations. Researchers suggest asking questions like: “What kinds of questions feel easier to ask ChatGPT than a human?” Not as an interrogation, but as genuine curiosity. We’re learning this together. None of us grew up with a robot therapist in our pocket.

We can also look at what AI companionship reveals about unmet needs. If our teenagers are turning to chatbots because they feel judged by us, that’s information we can use. If they’re seeking mental health support at 3 AM, maybe they need access to real support during the day. If they’re asking questions they’re too embarrassed to ask out loud, we can work on making our homes spaces where embarrassing questions are welcome. (Side note: having conversations in the car, when you don’t have to make eye contact, is my favorite.)

The goal isn’t to compete with AI. We can’t. We’re messy and imperfect and sometimes we say the absolute wrong thing. But we also love our kids in ways ChatGPT never could (omg a sentence I never thought I’d type). We can read between the lines. We know their history. We will stay when staying is hard.

What gives me hope is this: 80% of teenagers who use AI companions still say they spend more time with real friends than with chatbots. Our kids haven’t abandoned human connection. They’re just supplementing it in ways we’re still figuring out.

This is new territory for all of us. Our parents didn’t have a playbook for smartphones, and we don’t have one for AI companions. We’re writing it as we go.

But I do know this: the answer probably isn’t panic or permissiveness. It’s presence. It’s keeping the door open. It’s saying “you can ask me anything” and meaning it, even when the questions are uncomfortable.

Our kids are looking for someone who sees them. Let’s make sure that someone is us.

Have thoughts? I’d love to chat this out in the comments. We’re all just learning as we go.

Never thought we'd have to fight with AI for our children's trust one day, but here we are. I hope this not only motivates us to be better parents, but also helps us trust that our children see us as the safe harbour that we are.

I am so stuck in the middle on this. AI scares me, and it probably shouldn't, but I see more and more kids relying on everything electronic, and I feel that social skills and human interaction are becoming things they are not learning how to navigate. That is the part that scares me the most.